OpenAI researchers warned about a potentially dangerous artificial intelligence discovery ahead of CEO Sam Altman being ousted from the company, according to reports.

Several staff members of the AI firm wrote a letter to the board of directors detailing the algorithm, two people familiar with the matter told Reuters. The disclosure was reportedly a key development in the build-up to Mr Altman’s dismissal.

Prior to his return late Tuesday, more than 700 employees had threatened to quit and join backer Microsoft in solidarity with their fired leader.

The sources cited the letter as one factor among a longer list of grievances by the board leading to Altman’s firing, among which were concerns over commercialising advances before understanding the consequences.

The staff who wrote the letter did not respond to requests for comment and Reuters was unable to review a copy of the letter.

I don't buy it. From what I read the other day, even the original article didn't clarify the cause-effect connection between this claim and the ousting of the CEO.

Also, what was said was basically that LLMs with math capabilities were around the corner. That would solve a weakness of current LLMs, namely the lack of logic in the model. That's not a threat on its own. So far it's just a personal anecdote. This employee might even just be a manager who has no idea how AI works. (That's my impression as a scientist.)

To prove that it's a threat to humanity, that employee would have to do experiments because it's science. Note that ANY AI achievement has been hypothesized to be a threat to humanity by laymen. So far there's nothing to indicate that this employee analyzed anything deep to reach the conclusion.

Edit: it's also next to impossible for a language model with math capabilities to destroy humanity. It's popular fantasy unless you replace the US President with ChatGPT, for example.

To be fair, what the media has put out is nothing but a dumbed-down, simplistic description. Trying to deduce the actual threat here is not going to go well if you only go by the bullshit that has been reported.

Now, having said that, I too feel there is a lot more to this.

I have a feeling that the danger is some weakness we already know of, but one that LLMs will not outgrow in the foreseeable future.

My level of confidence in their ability to handle this technology safely is exactly 0.00.

It all fits. The OpenAI board was hired by Altman to keep an eye on ethics. I remember that a few months ago, Microsoft fired their entire AI ethics team because they apparently kept slowing the development down (despite that being the point). Now this OpenAI drama and the involvement of Microsoft…

They are all after the money bag.

I think it is not just their inability, it is all of ours. We can have some say, but no matter what, AGI or not, it is impossible to predict all the effects, good and bad, and thus impossible to manage them. It's just like when electricity was invented and rolled out. Who could have known all the good and bad things it would be used for, let alone prevented the bad? Impossible.

Several staff members of the AI firm wrote a letter to the board of directors detailing the algorithm, two people familiar with the matter told Reuters.

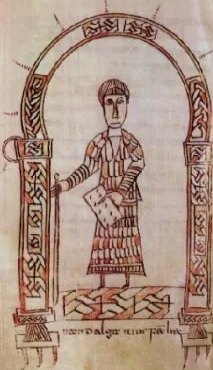

Was it these guys?

Wow!