On my Fedora 40 KDE install, hibernate is now an available power option. The install has been through upgrade cycles since 35 at this point. Barring the possibility that different DEs show different power options, I would imagine it comes down more to detecting whether the hardware is compatible with functional hibernation.
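For anyone who wants to check the kernel side of this, here is a minimal sketch. It assumes a Linux system with sysfs mounted, where `/sys/power/state` lists `disk` when suspend-to-disk is offered. The function names are my own, and a desktop may apply further checks of its own before showing the option.

```python
from pathlib import Path


def supported_sleep_states(state_text: str) -> set[str]:
    """Split the contents of /sys/power/state into individual state names."""
    return set(state_text.split())


def kernel_offers_hibernate(state_text: str) -> bool:
    """The kernel lists 'disk' when suspend-to-disk (hibernate) is available."""
    return "disk" in supported_sleep_states(state_text)


def check_this_machine(state_file: str = "/sys/power/state") -> bool:
    """Read the real sysfs file; returns False if it does not exist."""
    path = Path(state_file)
    return path.exists() and kernel_offers_hibernate(path.read_text())
```

Calling `check_this_machine()` only tells you what the kernel advertises, not whether resume will actually work on your hardware.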

Tiny11 comes in two variants: Tiny11 and Tiny11 Core.

Tiny11 Core is not suitable for use on physical hardware as it outright disables updates. It’s best used for short-term VM instances.

Tiny11 also has problems with updates. The advantages gained through Tiny11 will erode as Windows updates are applied. The installer is more tolerable than Windows 11’s because it doesn’t force an online account (though you still need to touch the telemetry settings). Components like Edge and OneDrive will inevitably rebuild themselves back in with cumulative updates. If this is something that would push you to not update your system, don’t subject yourself to using Tiny11. Additionally, Tiny11 fails to apply some cumulative updates out of the box, which could be a further security risk.

I recently tested the main Tiny11 in a VM after a different user recommended it in a now-deleted thread. Knowing the history from Tiny10 onward, I was skeptical that 11 would actually be able to update properly, and my findings backed up my initial skepticism about functional updates.

The A485 is actually such a terrible laptop. I would never recommend such garbage to anyone, considering mine almost never worked properly. In three years I have had six mainboard replacements for various hardware faults. Not a single one of the boards has been free from severe hardware faults.

I have been using BunkerWeb for some of my self-hosted sites since it was bunkerized-nginx. It is indeed powerful and flexible, allowing multi-site proxying and hosting with semi-flexible per-site security tweaks (some security options are still forcibly global, which is a limitation).

I use it on podman myself, and while it is generally great for having OWASP CRS, general traffic filtering targets and more built on top of nginx in a Docker container, the way BunkerWeb needs to be run hasn’t really remained stable between versions. Across several version upgrades, there have been severe breaking changes that require reading the setup documentation again to get the new version functional.

I just did some testing in the past hour or so and did a portable install from scratch using the Fedora Workstation 39 iso. I’m not exactly sure what your hardware detection issue would have been, but I can say that Anaconda could detect both a USB flash drive and an external hard drive I had plugged in.

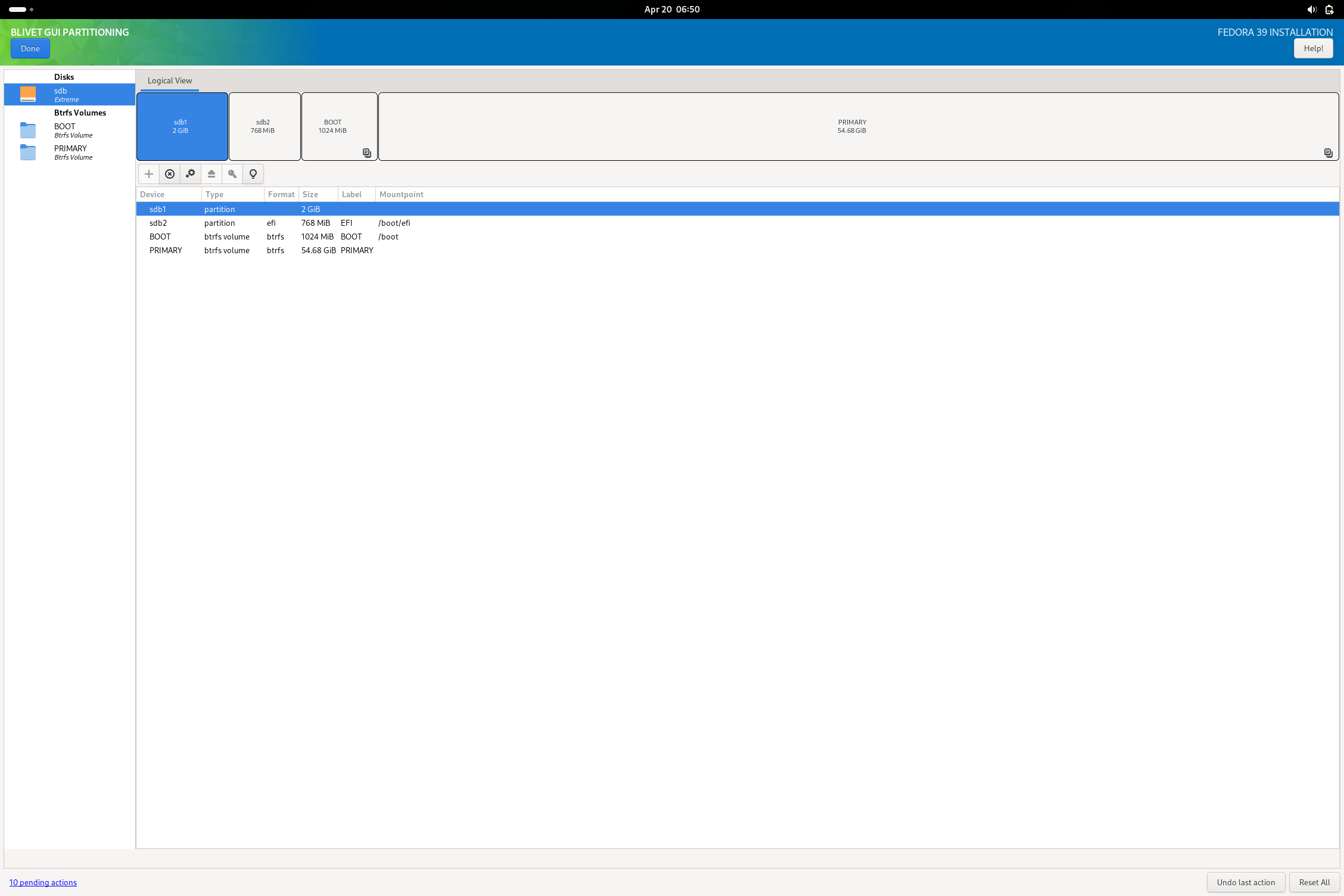

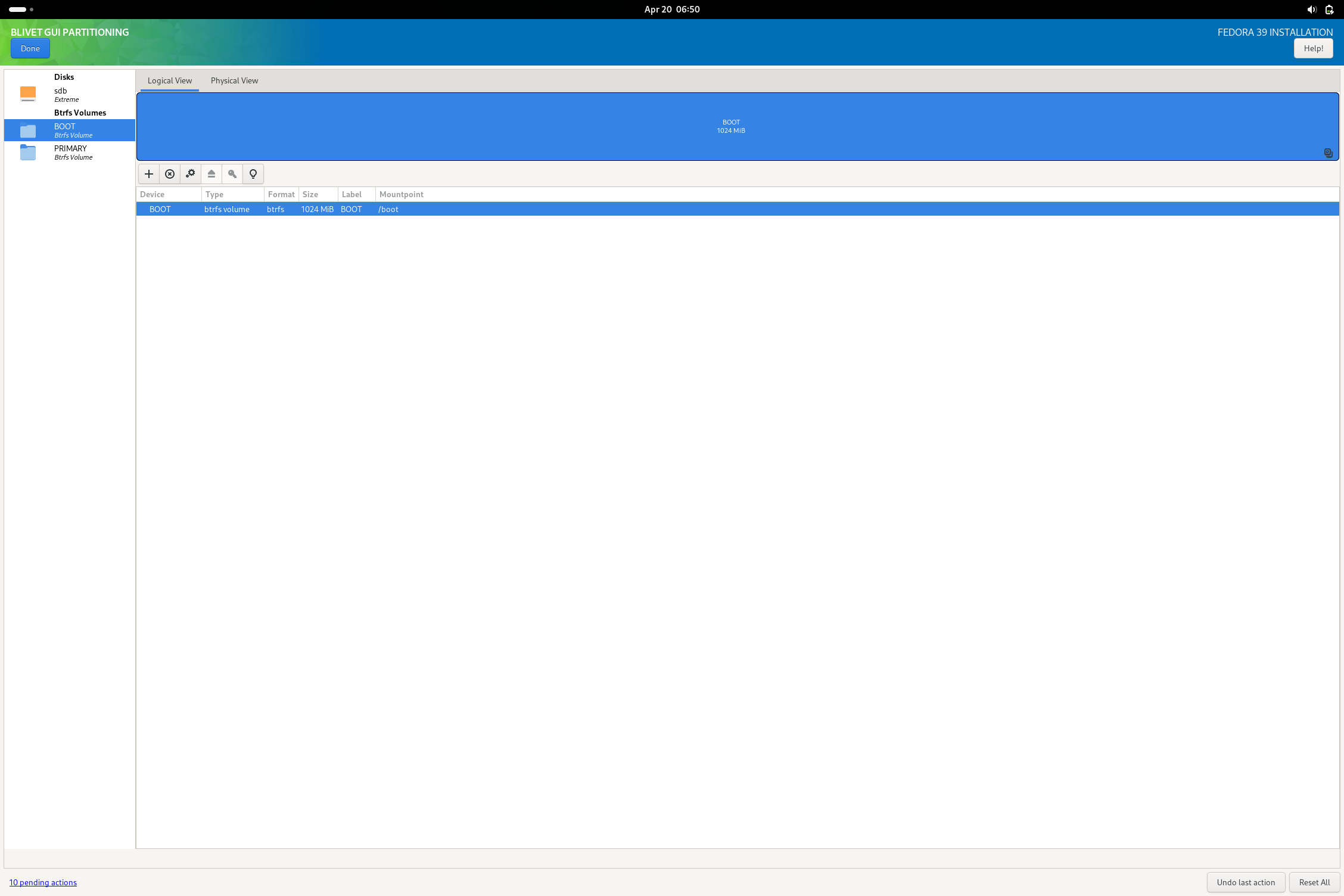

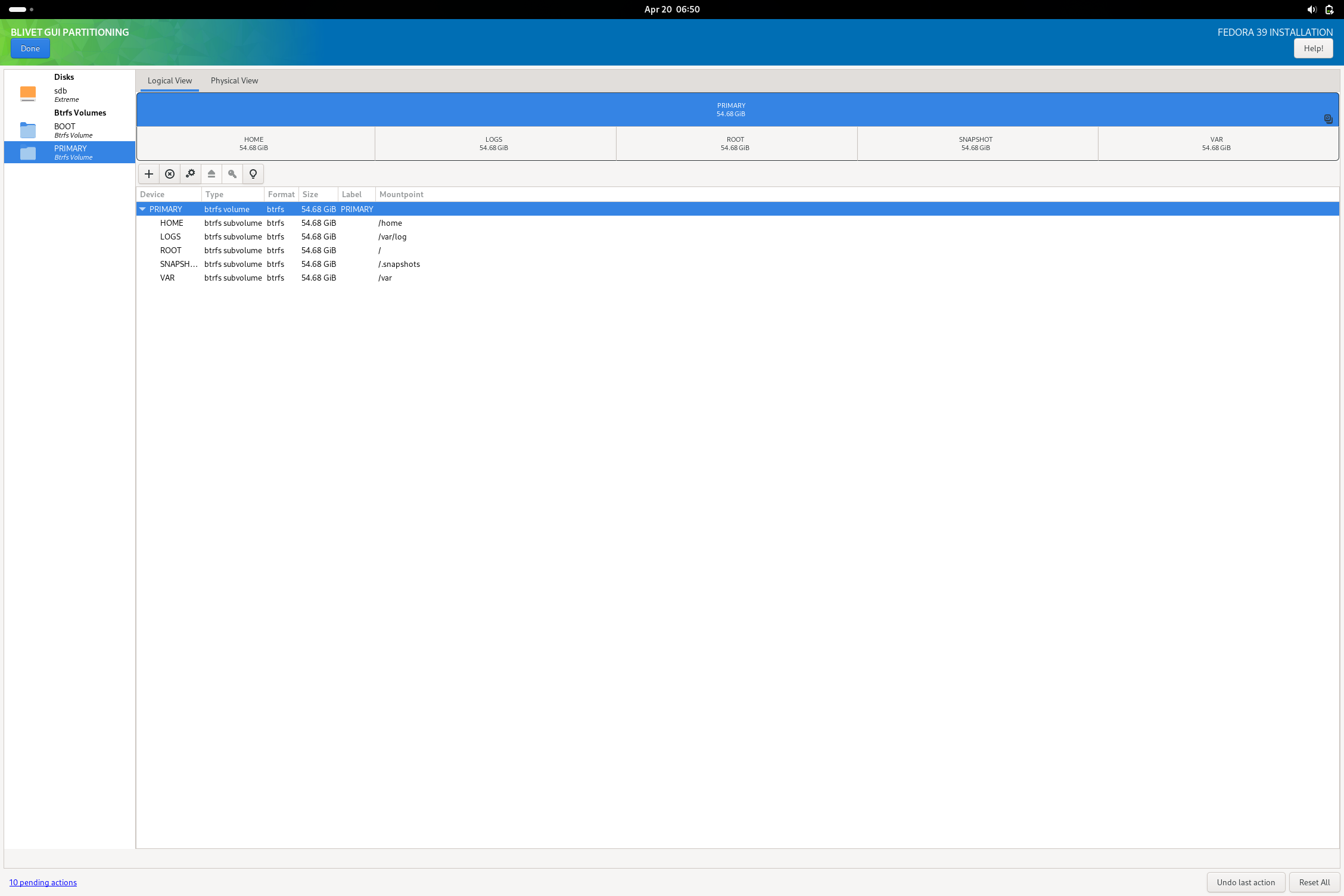

Going with the USB flash drive, I did skip using the automatic partitioning and went for using blivet to do my work. I did format the drive beforehand as I have always had issues with that drive properly accepting various partitioning commands (the installer no exception as tested). I did reserve a partition for a shared storage filesystem, but didn’t actually give it a filesystem here.

In blivet, here is a sample of the kind of partition schema I was talking about (something that might be helpful to OP or anyone else that wants to try this setup):

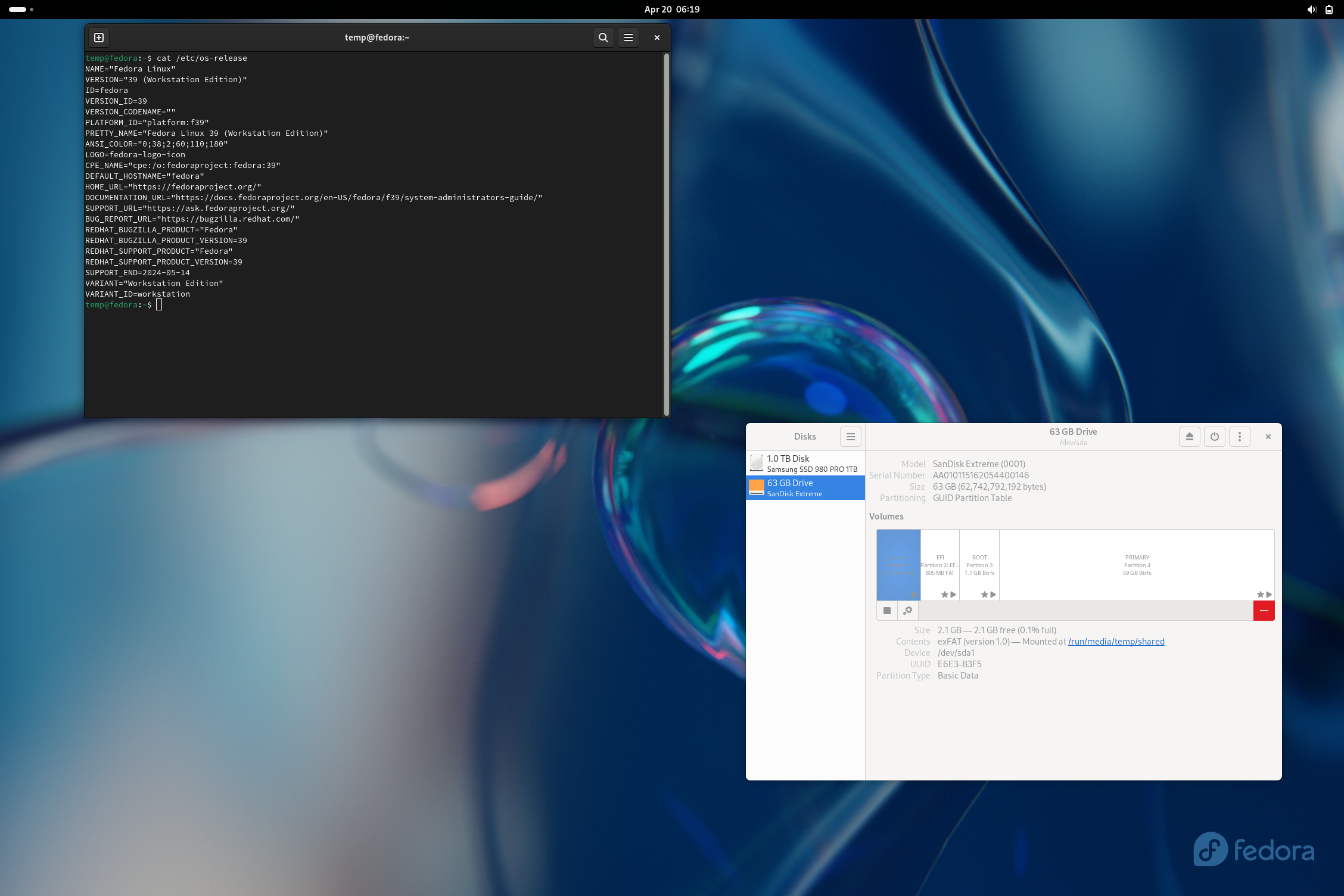

I was able to then complete the install as normal and boot into the finished USB drive. I noted a small non-fatal complaint from grub on boot, but I imagine this is fixed with updating the system. All systemd units boot without failure and I am able to get the system working with minimal issue. The only real issue I could note is that the installation is very sluggish (due to it being on a flash drive rather than an external ssd or some other more suitable media). After booting, I then opened Disks and added the missing exfat (or NTFS) filesystem I reserved a partition for. The reason I didn’t do this in blivet during install is because the option doesn’t actually exist to make an exfat fs in the tool.

Hopefully, this comment is helpful toward getting such a setup working.

EDIT:

Something I did notice with GRUB on Fedora Workstation 39 is that by default, the menu will not show unless pressing escape on boot. There is a useful AskUbuntu post that explains in detail how to access the grub menu and how to change it to behave in a better fashion for a multiboot system.

Ah, that would put a bit of complication into things. If you want to actually accomplish this though, you should largely start with the same steps as a standard system install, using a second USB flash drive to write the distro onto the external SSD, leaving enough space to build the rest of the partitions you need. If you intend to make a Windows-shared partition (exfat, fat32, or NTFS), it is probably best to put that partition either first or just behind the EFI partition so that Windows systems won’t have a hard time finding it. Exfat or NTFS would be a better choice for this type of partition.

I would still generally recommend keeping the live distros on a separate bootable drive, but you can size and reserve dummy partitions after the rest of your normal dual-boot installs and shared data partitions for live installers to overwrite. There is likely going to be some experimentation with getting the OS bootloader (such as the GRUB provided by Fedora in this case) to pick them up and add them as boot entries. You should (depending on the live image) be able to dd write them to the ending partitions reserved for the image as long as the partition is sized equal to or larger than the ISO image’s size (it’s best to give the partition at least a few blocks of oversize when writing ISOs directly).
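As a rough sketch of the size check described above, something like this can be run before writing an image with dd. The helper names and the 1 MiB default margin are my own assumptions standing in for "a few blocks of oversize", and reading the size of a block device will normally need root.

```python
import os


def size_of(path: str) -> int:
    """Size in bytes of a regular file or a block device.

    Seeking to the end works for both, unlike os.stat(), which
    reports 0 for block devices.
    """
    with open(path, "rb") as handle:
        return handle.seek(0, os.SEEK_END)


def image_fits(image_bytes: int, partition_bytes: int,
               margin_bytes: int = 1024 * 1024) -> bool:
    """True if the partition holds the image plus a safety margin.

    The 1 MiB default margin is an arbitrary assumption, not a rule.
    """
    return partition_bytes >= image_bytes + margin_bytes
```

For example, `image_fits(size_of("live.iso"), size_of("/dev/sdX5"))` with your own (here hypothetical) image and partition paths. It only compares sizes; it does not tell you whether the bootloader will pick the image up afterwards.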

Edit: In a proper Fedora install, you should almost never need to disable SELinux or set it to permissive unless you know why you don’t want it.

For the longest time reading this post, I didn’t catch that you were setting up a simple dual boot for an internal SSD and thought with using tools like Ventoy you were making a multiboot portable install.

You are obscenely overcomplicating this. Your current approach is almost completely wrong to getting a functional multiboot system that passes secure boot and everything else.

Quite literally, when bootstrapping from Windows you can use Rufus or Ventoy to dump the ISOs onto a USB stick. Then go into the BIOS and boot from the USB EFI entry. From there, simply install Fedora (no VM necessary). You’ll get Fedora installed in a GPT/EFI configuration (if you formatted your drive for install). If doing manual partitioning to leave space or do other configurations, format the drive to GPT. If multibooting, you may want to expand your EFI partition beyond 512MiB for multiple distros.
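If you are unsure whether the live session actually came up in EFI mode before you start installing, a small check like the one below can help. It is only a sketch: it relies on Linux exposing `/sys/firmware/efi` when booted through UEFI, and the function name and `sys_root` parameter are my own.

```python
from pathlib import Path


def booted_via_efi(sys_root: str = "/sys") -> bool:
    """True if the running Linux system was started through UEFI.

    The kernel only creates <sys_root>/firmware/efi on EFI boots;
    on a legacy BIOS/CSM boot the directory is absent.
    """
    return (Path(sys_root) / "firmware" / "efi").is_dir()
```

If this returns False in the live environment, the installer will most likely set things up for legacy boot, so it is worth rebooting and picking the EFI entry for the USB stick instead.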

For other Linux OSes you want to install alongside, you can then install them in the free space. Another comment mentioned not installing a bootloader on the secondary OS(es), which is generally a good idea.

For Tails, it is not meant to be installed on an SSD. It is best to use it on a flash drive.

Overall, a majority of your issues stem from the following:

- trying to use live distro images as an actual OS install

- using Ventoy as your bootloader

- using legacy MBR partition tables instead of GPT without an express need for them

- using VirtualBox in general to provision bare metal hardware (changes need to be made in your VM software of choice to get EFI booting to work)

I’d argue your conclusion that people don’t switch to Linux because you found it too hard comes almost entirely not from any issue with Linux, but from the factors you wedged yourself into on a modern x86 PC through the methods you chose to accomplish your goal.

The username release is quite recent for those not participating in beta versions of Signal.

This is render offloading, not GPU switching. GPU switching implies switching the primary rendering device (the one powering the displays) entirely rather than rendering on a separate GPU and copying the output to the primary.

I’m glad I am not the only one who calls my little ASUS netbook craptop. Kinda flimsy and definitely underpowered, but a perfect little device to run basic applications and terminal applications on a minimal window manager.

In general, Microsoft doesn’t support many filesystem formats at all. In the same way you shouldn’t attempt to cross-run a Steam library from Windows on Linux, you really shouldn’t do so from Linux to Windows. It’s in part due to how permissions, execution flags, filesystem case sensitivity, and file metadata are interpreted by Windows applications vs. Unix-like applications. There will be issues going either way when using foreign filesystems in complex tasks.

While it should be expected that the files will have the same contents where they are actually the same (i.e. a Proton game will be the same as a Windows game as it comes from the same Steam depot), there is a good chance that the interpretation isn’t going to translate 1:1 on either side. Furthermore, Steam libraries include additional data beyond just the game files, which is likely why they are invalidated. A significant portion of visible cross-OS portability issues is due to many applications like Steam using OS-specific file structures. More than likely Steam is going to intentionally make the library metadata not fully compatible between Linux and Windows Steam and force validation before launch, because there is a good chance the games aren’t even compatible builds or otherwise have additional compatibility content dragged along (such as Proton WINE prefixes that are to be completely ignored when launching from actual Windows, or additional libraries and modded executable binaries that have platform-oriented patches).

If you seriously want to run a cross-share of a Steam Library between Linux and Windows, you should really utilize Steam Cloud save. If you want to “deduplicate” your games, your best bet would be if you can open the foreign fs and have a compatible copy of the game, to simply clone the game files to the current filesystem and remove from remote rather than attempt to force a multi-os single-partition shared library. You are less likely to destroy your Steam library if you treat the actual libraries separate, but move the games like they were downloaded externally. Barring being able to do that, just don’t cross-share games. Simply reboot into the OS that has the game you want to play instead.

One can comfortably use NTFS to read and write files on modern Linux distributions, but NTFS in general is not very suitable for running applications on or using for long-term usage between a dual-boot. Windows can and will often lock up NTFS partitions whenever it decides to hibernate rather than shutdown or sometimes suspend. NTFS while not being the greatest FS in general will also have worse performance on Linux than Windows. You can totally keep data stores on a Linux system, though you won’t be able to make use of many of the advanced features some Linux/BSD-oriented filesystems offer. You can totally keep your drives as they are now, though if you intend to make a full switch you should consider migrating your drives’ data over to more Linux-oriented filesystems (be it Btrfs, Xfs, or Ext4 is your choice depending on the features you want). In short, NTFS works but lacks a lot of features and performance that a more suitable filesystem would offer.

Yes, just make sure that the boot setup for the distro install is compatible with what you intend to install it onto (i.e. if your server is going to be using EFI to boot an OS, install your Ubuntu instance as GPT, EFI onto the SSD). Depending on what wireless modules you are using, where you are sourcing them, and how you are installing them, you might need to ensure Secure Boot is disabled in the BIOS of your server. This will be the case if the kernel module package you are installing doesn’t sign the wireless adapter driver you intend to use. Otherwise, most drivers you could possibly need should be baked into the kernel and you should be good to go.

(One further sidenote coming from someone who has not used Ubuntu in a long time (since 16.04’s release): it would be good to check in the /etc/fstab file that the filesystem references are using either UUID or PARTUUID. Depending upon the drive layout of the server you are mounting the intended drive into, traditionally labeled references such as sda or nvme0n1 can change depending upon the slot each drive is seated in. Using UUID or PARTUUID in the fstab reference alleviates any potential complications from this scenario where fstab might reference the wrong drive in mounting partitions. I do believe Ubuntu would likely do this by default nowadays, but it can’t hurt to check.)
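For the fstab check, a quick sketch like this can flag entries that still point at kernel-assigned device names. The function name and the list of prefixes are my own choices and not exhaustive; entries using `UUID=`, `PARTUUID=`, `LABEL=` or `/dev/disk/by-*` paths are left alone.

```python
# Device name prefixes that can change between boots or drive slots.
# This list is an assumption and can be extended as needed.
UNSTABLE_PREFIXES = ("/dev/sd", "/dev/nvme", "/dev/hd", "/dev/vd")


def unstable_fstab_entries(fstab_text: str) -> list[str]:
    """Return the source fields of fstab lines that use raw device names."""
    flagged = []
    for line in fstab_text.splitlines():
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue  # skip blank lines and comments
        source = stripped.split()[0]  # first field is the filesystem source
        if source.startswith(UNSTABLE_PREFIXES):
            flagged.append(source)
    return flagged
```

Feeding it the contents of `/etc/fstab` (for example via `open("/etc/fstab").read()`) should return an empty list on an install that already uses UUIDs throughout.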

I’d imagine mpd with one of many frontends would work well enough. You’d just need to use a dummy music library directory with symlinks to your four music storages for mpd to pick up and catalog everything.
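A minimal sketch of the symlink setup, assuming the storages are plain directories. The function name is my own and the paths in the usage note are hypothetical; you would then point `music_directory` in your mpd.conf at the dummy directory.

```python
from pathlib import Path


def link_music_sources(library_dir: str, sources: list[str]) -> list[Path]:
    """Create one symlink per source directory inside library_dir.

    Each link is named after the source's final path component. If a
    name is already taken (e.g. two sources both called "Music"), that
    source is skipped rather than overwritten.
    """
    library = Path(library_dir)
    library.mkdir(parents=True, exist_ok=True)
    created = []
    for src in sources:
        src_path = Path(src).resolve()
        link = library / src_path.name
        if link.is_symlink() or link.exists():
            continue  # name collision: leave the existing entry alone
        link.symlink_to(src_path, target_is_directory=True)
        created.append(link)
    return created
```

Something like `link_music_sources("/home/me/mpd-library", ["/mnt/disk1/music", "/mnt/nas/flac"])` would leave you with a single directory mpd can scan, though mpd still needs read permission on the real storage locations.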