- 8 Posts

- 59 Comments

Do you ever make things harder for people around you intentionally? If so, why? If not, why do you think people do that to you?

1·21 days ago

Are you familiar with left-wing blockchain and that whole strand of research, or are you just talking because you have no clue that there have always been plenty of anti-capitalists and post-capitalists in the blockchain scene?

11·21 days ago

Have you watched the video or just stopped at the title?

1·22 days ago

There are a lot of leftist spaces in the blockchain world. While they are a minority, they have a distinct political agenda and set of values, separate from most of the web3 world. They envision using blockchain either for local economies (an evolution of the circular economy and local currencies that were popular in the ’90s and 2000s) or for a more global-scale realignment of incentives, through socialist market economies or more planning-oriented solutions.

4·27 days ago

I know people who occupied the offices. They were perfectly aware they would be fired, and the people selected for the action were the least economically vulnerable, because retaliation was certain. Anything else is journalistic spin.

121·1 month ago

You use “luddite” as if it’s an insult. History proved the Luddites were right in their demands and that they were fighting the good fight.

42·1 month ago

We do, and anybody telling you “it’s complicated” has an agenda.

88·2 months ago

Please, yankee, don’t make everything happening in the world about you.

2·2 months ago

Gnosticism is by definition the epitome of duality. That said, conflict with a reactionary entity doesn’t imply you’re not reactionary. Russia and Ukraine are at war with each other and they are both very reactionary, becoming even worse due to the needs produced by the conflict.

Also, hackers tend to hold libertarian (in the European sense) values, and that’s how they pick their targets for direct action. When I say they are reactionary, I mean they are reactionary in effect, not in intent. That makes them even more problematic, because it’s not immediately obvious what the problem is.

4·2 months ago

It would be quite a long argument, but I suggest TechGnosis by Erik Davis and this article: https://www.are.na/block/24206425

tl;dr: hacker culture is grounded in gnostic, individualistic Californian hippie culture, and shares roots with what is now the dominant, reactionary ideology of big tech moguls, ketamine cryptocolonialists and business white supremacists. One key tenet of hacker culture is the power of the individual, super-human brain to reshape entire societies through the production of disruptive technology. The TV series Mr. Robot is one example of this mindset. It preaches the superiority of the world of minds and the virtual over the material. The material is subject to the virtual, and the virtual is where the real stuff is happening, where there’s a real confrontation of power (the hacker vs the system, disruptors vs established businesses, out-of-the-box thinkers vs corporate drones). This mimics gnostic beliefs very closely. It is reactionary because it is individualistic and because it erases material conditions and collective action, but it also operates from such a simplified worldview that it is impossible to adhere to if you have even a very basic understanding of disciplines like sociology, history or politics. It’s just not how the world works.

36·2 months ago

I have a few. I’m not the kind of person who says controversial things to attract attention, but I also don’t refrain from putting them out there.

A selection of the ones I use in my political activity:

- knowing things doesn’t change things

- work should be abolished

- atheism and rationalism are a scourge on the ability of the Left to reach people

- hacker culture is intrinsically gnostic and reactionary

Some others:

- suicidal and self-harming people should be listened to by understanding and validating the motivations behind their desire to hurt or kill themselves, even entertaining their own plans with them. Anything else would likely drive a wedge between the two of you that will prevent you from addressing the causes and ultimately doing what’s good for them.

- mathematics is just narrative with rules/arbitrary opinions with rules

- nurses, doctors, teachers and other care professions attract the worst psychopaths because they are put in charge of vulnerable people. On top of that, they are perceived as caregivers by default, so it’s harder for anyone to suspect them of doing fucked up stuff.

Edit: people downvoting in a thread about controversial opinions must be very very intelligent

4·2 months ago

We: Italians, Spanish, Greeks, Arabs, Turks, Vietnamese, South Asians, Japanese.

Barbarians: everybody else, especially the French

3·2 months ago

You clearly haven’t met a Southern European. We divide the world into civilized ass washers and uncivilized smelly barbarians.

3·2 months ago

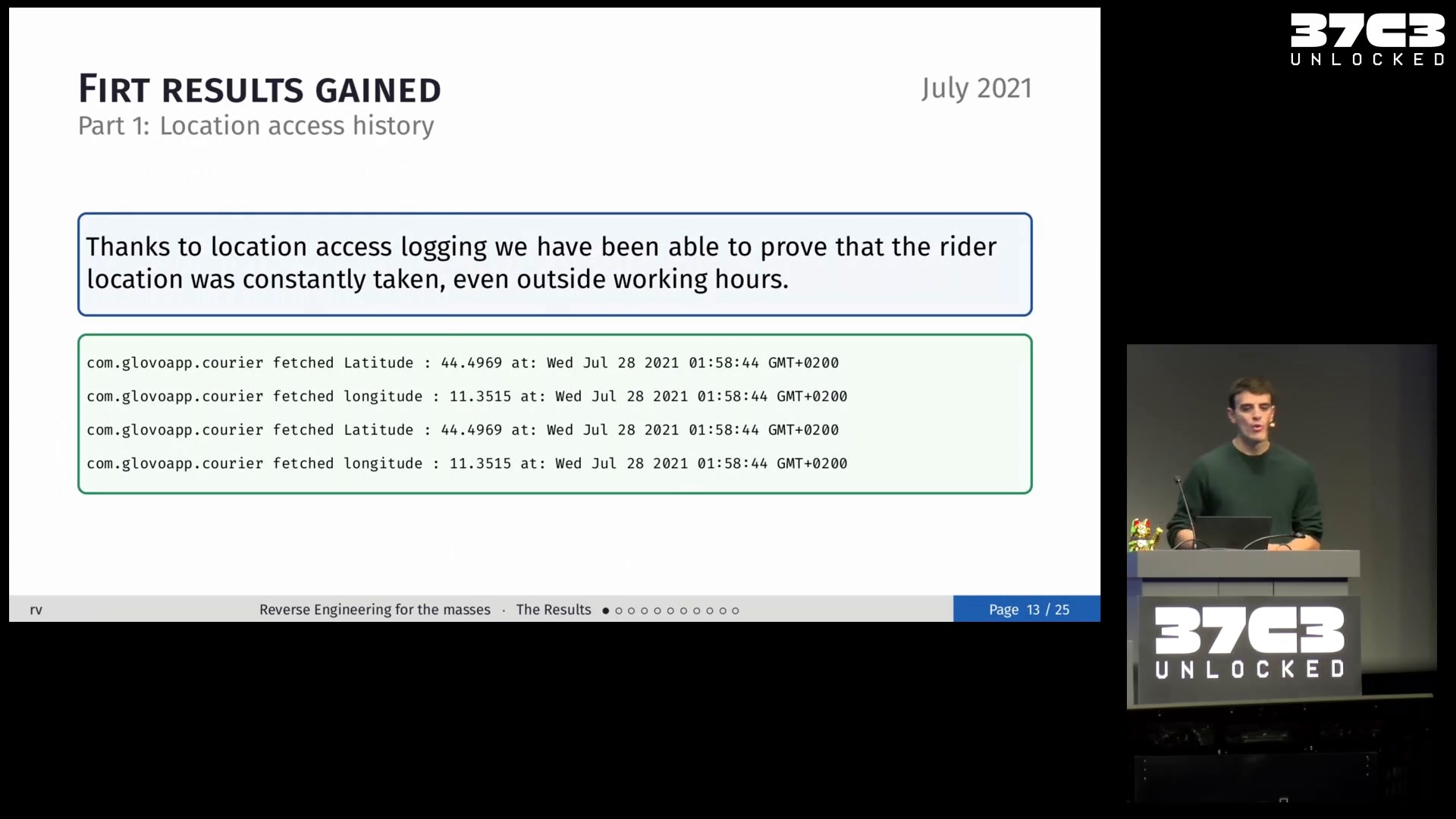

I use Notion+Notion Calendar for this and I delegate a lot of stuff to it: bureaucracy, booking the barber, changing the bedsheets, all my work, birthdays, etc. How people can trust their brain with more than two or three items is unfathomable to me. I mean, when I was younger I could keep a dozen upcoming appointments in mind and go through them every few hours to make sure I wouldn’t miss anything, but as soon as your routine is disturbed by work stuff, it’s impossible.

8·2 months ago

Months? You clearly haven’t tried Pyanodons.

Jokes aside, yeah, it would be a killer.

3·2 months ago

Mastercard sends your transaction data live to banks. They sell your data to third parties for marketing, profiling and the like. Credit score is the least of your problems.

I know because I developed a system, in a major European bank, that enriched their transaction data with Mastercard data for live, predatory marketing.

nah, you will attract only those that already kinda agree. All the others will see weirdos with weird ideas, weird clothing and weird vocabulary, approaching them in the street or promoting events that they don’t care about.

“Talking to people” is something I do, since I’m in union organizing, and the way people react to the same arguments varies wildly over time. After the waves of layoffs in the tech sector, non-politicized tech workers are far more receptive to pro-union rhetoric, in a way that would have been impossible before.

About accelerationism: I’m not saying that failing an election is a necessary step in a teleological sense. If you enter elections, you should enter them to win. Nonetheless, a defeat is useful for radicalizing people. It is a recuperation of what is perceived as a defeat in one system in order to feed a different system. Electoral betrayal is useful, but not necessarily something you should strive for, as an armchair accelerationist would claim. There are better ways to spend your time and energy imho, but if it happens, it is still good manure for growing the seeds of something new.

46·2 months ago

“Leftist” might be a bit derogatory, but it’s not really an insult, come on.

254·2 months ago

The mistake in this logic is to believe that this betrayal of electoral logic won’t radicalize people. It is a necessary step. There are now 11 million French people, many of whom probably don’t believe much in electoralism but vote anyway, who are furious at what’s happening.

People don’t change their minds by listening to arguments, they change their minds by living through experiences. The experience of joy after winning, followed by Macron’s disregard of democratic logic, will mobilize an insane amount of popular energy, unlike snarky “electoralism doesn’t work” comments that are relatable only to a microscopic niche of edgy, maximalist leftists.

Vintage Story solo, but I gave up because it’s too cumbersome to play without a team. Necesse with a team, lol. Mechabellum in solo multi. AoW4 as a filler.